Service providers (and enterprises) focused on improving quality of experience (QoE) need better tools with new levels of insight.

Some things get simpler as time goes on. Managing communications networks is not currently one of those things. With cloud, software-as-a-service, analytics, and big data now mainstream, these networks are becoming faster, but the path that service and application traffic takes between the service provider and the user is becoming more winding, less predictable, and harder to control.

Applications are growing in number and diversity. Traffic is becoming denser. Subscribers rely on networks in increasingly dynamic ways, even as their expectations of service quality grow.

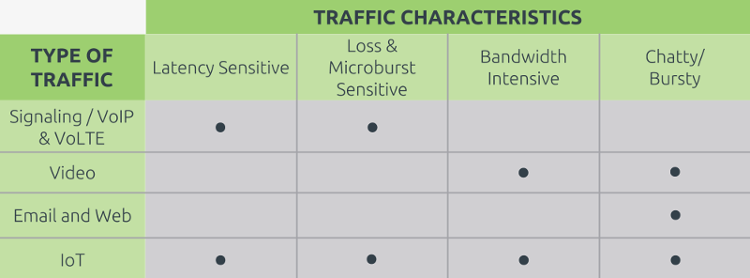

This creates something of a quandary for service providers. The crux of the challenge is that traffic for different applications behaves differently, and application requirements vary significantly. The table below illustrates this for just a few application examples.

How can a service provider keep all these applications running smoothly, and their users happy, when traffic characteristics vary so widely? Clearly, service providers (and enterprises) need better tools with new levels of insight. Legacy network and service performance management solutions are no longer effective.

Something Old, Something New

Traditional management tools typically feature a distributed, probe-centric architecture. They are designed to use SNMP and the CLI to monitor network components such as routers, firewalls, switches, and load balancers, and to monitor and analyze bandwidth (traffic) performance and utilization through flow protocols such as NetFlow, sFlow, J-Flow, and IPFIX.

But these legacy protocols do not provide a complete picture of what really matters to users and, by extension, to the competitive success of service providers. For example, SNMP infers the performance of individual devices only (not end-to-end), using periodically collected data.
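To make that limitation concrete, here is a minimal sketch of how link utilization is commonly derived from two periodic samples of an interface octet counter. The function name, the 300-second polling interval, and the sample values are assumptions chosen for illustration; they do not come from any particular tool.

```python
# Sketch: average utilization from two polled octet-counter samples.
# Assumes a 64-bit octet counter (for example, IF-MIB ifHCInOctets) and
# at most one counter wrap between the two polls.
COUNTER64_MOD = 2 ** 64


def utilization_pct(octets_start: int, octets_end: int,
                    interval_s: float, link_bps: float) -> float:
    """Return average link utilization (percent) over one polling interval."""
    delta_octets = (octets_end - octets_start) % COUNTER64_MOD
    return 100.0 * delta_octets * 8 / (interval_s * link_bps)


# Hypothetical scenario: a 1 Gbps link polled every 300 seconds.
# The link is saturated for 2 seconds and idle for the rest of the interval.
LINK_BPS = 1_000_000_000
burst_octets = 2 * LINK_BPS // 8          # 2 seconds at line rate
average = utilization_pct(0, burst_octets, 300, LINK_BPS)
print(f"Reported utilization: {average:.2f}%")
```

In this scenario the poll reports well under 1% utilization even though the link was fully saturated for two seconds, which is the kind of short-lived event that periodic, per-device collection tends to hide.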

New Monitoring and Assurance Requirements

For complete, end-to-end visibility into and control over both network performance and user experience, service providers must use instrumentation that combines two types of monitoring:

Active monitoring performs end-to-end measurement between nodes. It relies on probes that inject test packets into the network; the results from a large number of these packets are recorded to calculate key performance indicators (KPIs) like packet loss and latency. The Two-Way Active Measurement Protocol (TWAMP, discussed in more detail below) plays an important role in traffic simulation.

Passive monitoring, which is:
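As a rough illustration of how recorded test packets turn into KPIs, the sketch below summarizes a batch of probe results into packet loss and latency figures. The `ProbeResult` type, the `summarize` function, and the sample values are hypothetical names and data introduced for this example.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class ProbeResult:
    seq: int                   # sequence number of the test packet
    rtt_ms: Optional[float]    # round-trip time; None means no reply arrived


def summarize(results: list[ProbeResult]) -> dict:
    """Compute packet loss and basic latency KPIs from probe results."""
    sent = len(results)
    rtts = [r.rtt_ms for r in results if r.rtt_ms is not None]
    loss_pct = 100.0 * (sent - len(rtts)) / sent if sent else 0.0
    return {
        "sent": sent,
        "received": len(rtts),
        "loss_pct": loss_pct,
        "avg_rtt_ms": mean(rtts) if rtts else None,
        "max_rtt_ms": max(rtts) if rtts else None,
    }


# Hypothetical batch of four test packets, one of which was lost.
sample = [
    ProbeResult(0, 10.0),
    ProbeResult(1, None),
    ProbeResult(2, 14.0),
    ProbeResult(3, 12.0),
]
print(summarize(sample))
```

A production system would record far more packets per interval and would usually track percentiles and delay variation as well as averages.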

- Microscopic in that it analyzes every packet through a measurement node (for example, a switch or router), helps providers understand traffic characteristics, and enhances forensics and troubleshooting.

- Macroscopic in that it monitors overall traffic flows and is used for traffic engineering.
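The macroscopic view can be sketched as a simple aggregation over flow records. The example below assumes flow records have already been exported and decoded into `(source, destination, bytes)` tuples; the `top_talkers` function and the addresses are hypothetical.

```python
from collections import defaultdict


def top_talkers(flows, n=3):
    """Sum bytes per (source, destination) pair and return the n largest."""
    totals = defaultdict(int)
    for src, dst, nbytes in flows:
        totals[(src, dst)] += nbytes
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]


# Hypothetical decoded flow records: (source, destination, bytes).
flows = [
    ("192.0.2.1", "198.51.100.1", 500),
    ("192.0.2.2", "198.51.100.1", 1200),
    ("192.0.2.1", "198.51.100.1", 900),
]
for pair, total in top_talkers(flows):
    print(pair, total)
```

Totals like these are the sort of input traffic engineering works from, while the microscopic view keeps per-packet detail for forensics and troubleshooting.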

TWAMP for the Win

Effective performance assurance for modern communications networks must support TWAMP (IETF RFC 5357), an open protocol used to set up and execute measurement sessions between two devices. Why TWAMP? It:

- Uses active probe packets to measure two-way delay between endpoints in IP networks.

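- Timestamps test packets at both endpoints so that delay can be calculated accurately.

- Typically does not require both endpoints to be time-synchronized for two-way measurements (one-way results do depend on synchronized clocks).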

- Measures a fairly wide range of KPIs, including packet loss, and one-way and two-way packet delay/variation.
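The reason two-way measurements tolerate unsynchronized clocks can be shown with the four timestamps attached to each test packet. The sketch below is not a TWAMP implementation; it only reproduces the arithmetic, and the `TwampSample` type, function names, and sample values are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class TwampSample:
    t1: float  # sender transmit time (sender clock), seconds
    t2: float  # reflector receive time (reflector clock), seconds
    t3: float  # reflector transmit time (reflector clock), seconds
    t4: float  # sender receive time (sender clock), seconds


def two_way_delay(s: TwampSample) -> float:
    """Round-trip network delay, excluding reflector processing time.

    Each difference uses timestamps from a single clock, so any constant
    offset between the two clocks cancels out.
    """
    return (s.t4 - s.t1) - (s.t3 - s.t2)


def one_way_delays(s: TwampSample) -> tuple[float, float]:
    """Forward and reverse delay; only meaningful with synchronized clocks."""
    return s.t2 - s.t1, s.t4 - s.t3


# Hypothetical packet: 10 ms forward, 2 ms reflector processing, 12 ms back,
# with the reflector clock running 5 seconds ahead of the sender clock.
sample = TwampSample(t1=100.000, t2=105.010, t3=105.012, t4=100.024)
print(f"two-way delay: {two_way_delay(sample) * 1000:.1f} ms")
print("one-way delays (skewed by clock offset):", one_way_delays(sample))
```

With the 5-second offset, the two-way result still comes out at the true 22 ms, while the naive one-way values are off by the full offset in opposite directions.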

Bring it All Together

As communications networks continue to evolve and become more complex, interdependencies between metrics cause new forms of instability, resulting in the need for deeper analysis to understand how short-term events can have a large impact. That analysis is possible by using instrumentation that delivers a new level of visibility, in order to:

- Detect and isolate quality of service (QoS) and quality of experience (QoE) issues with exceptional speed

- Discover unexpected KPI trends and relationships

- Uncover ‘invisible’ QoE impairments, aka “ghosts in the network”

- Correlate metrics to isolate the root cause of performance issues

To achieve all this, service providers are deploying solutions like Accedian’s SkyLIGHT, which provides granular, accurate insight across the entire network, to quickly identify and remediate performance problems, often before they become an issue for end users. Likewise, enterprises today use diversified, distributed applications spread across public cloud, SaaS, and private data centers. Network performance and assurance are therefore also key for enterprises, to ensure optimal application performance and user experience.
